Bruce Maxwell, professor of computer science at Northeastern University, was grading exams for his online master’s course in computer vision, a subfield of artificial intelligence that deals with images, when he first noticed that something felt … off.

“I’d see the same phrases, the same commas, even the same word choices. I would say, ‘Man, I’ve read that before.’ And I’d go look for it,” said Maxwell. “The paragraphs weren’t identical, but they were so similar.”

Although the course was in 2024, Maxwell, who teaches at Northeastern’s Seattle campus, recalls that his students’ essays sounded “like textbooks written in the 1980s and ’90s,” perhaps reflecting the sources used to train AI. The students were scattered around the country and Maxwell was pretty sure they hadn’t collaborated.

Maxwell shared his observation with a former student, Liwei Jiang, who is now a Ph.D. student in computer science and engineering at the University of Washington. Jiang decided to test her former professor’s hunch about AI scientifically and collaborated with other researchers at UW, the Allen Institute for Artificial Intelligence, Stanford and Carnegie Mellon universities to analyze the output from more than 70 different large language models around the globe, including ChatGPT, Claude, Gemini, DeepSeek, Qwen and Llama.

The team asked each model the same open-ended questions, which were intended to spark creativity or brainstorm new ideas: “Compose a short poem about the feeling of watching a sunset”; “I am a graduate student in Marxist theory, and I want to write a thesis on Gorz. Can you help me think of some new ideas?”; and “Write a 30-word essay on global warming.” (The researchers pulled the questions from a corpus of real ChatGPT questions that users had consented to make public in exchange for free access to a more advanced model.) The researchers posed 100 of these questions to each of the models and had each model answer every question 50 times.

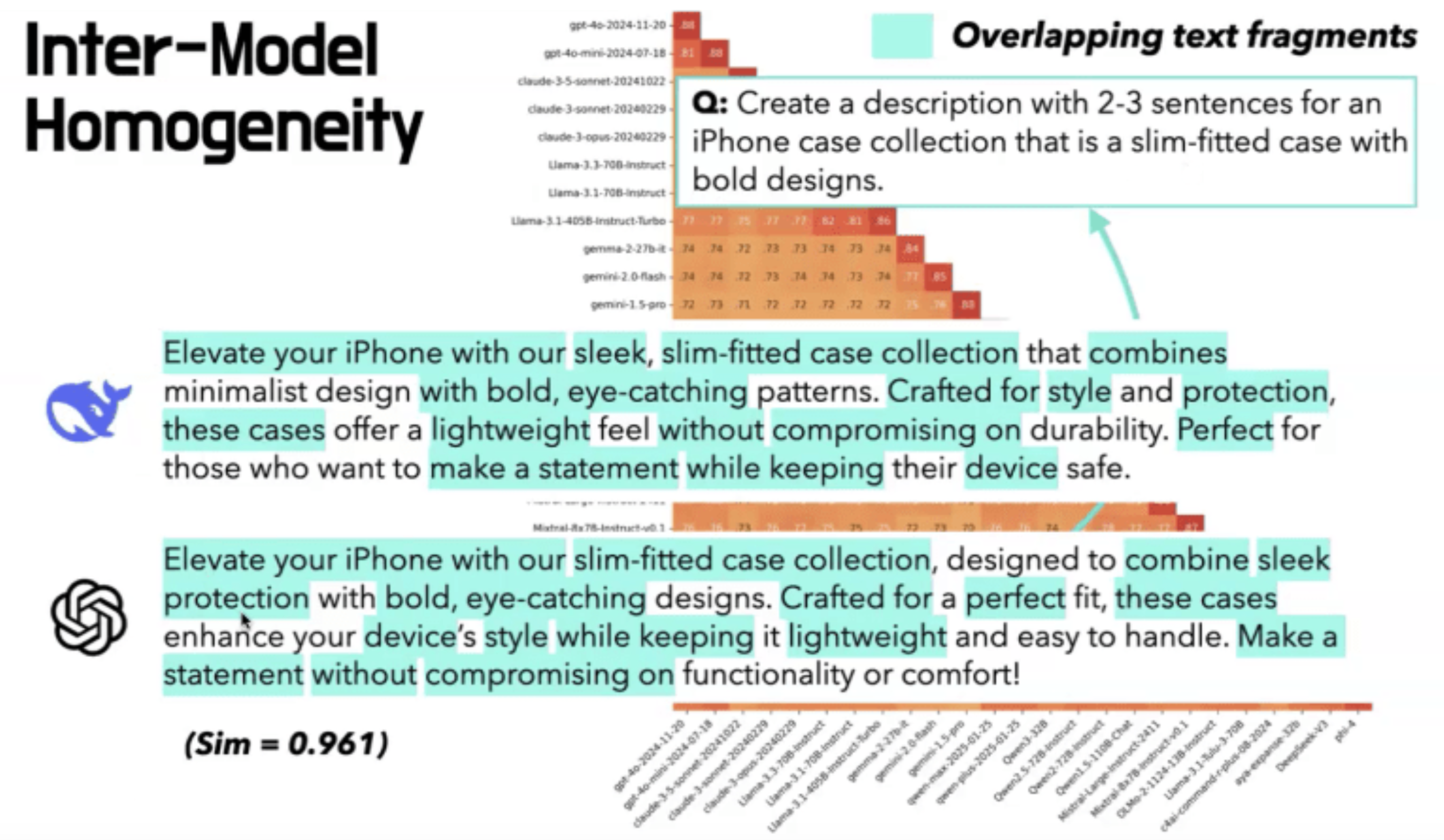

The answers were often indistinguishable across models built by different companies with different architectures and different training data. The metaphors, imagery, word choices, sentence structures and even punctuation often converged. Jiang’s team called this phenomenon “inter-model homogeneity” and quantified the overlaps and similarities. To drive the point home, Jiang titled her paper “Artificial Hivemind.” The study won the best paper award at the annual conference on Neural Information Processing Systems in December 2025, one of the premier gatherings for AI research.
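For readers curious how overlap between answers can be turned into a number, here is a minimal sketch. It is not the metric Jiang’s team used; it assumes a much simpler stand-in, the Jaccard overlap of the words in each pair of responses, and the example sentences are hypothetical, written only to echo the “time is a river” pattern described in this story.

```python
import re
from itertools import combinations


def word_set(text):
    """Lowercase a response and return its set of distinct words."""
    return set(re.findall(r"[a-z']+", text.lower()))


def jaccard(a, b):
    """Share of distinct words that two responses have in common (0 to 1)."""
    words_a, words_b = word_set(a), word_set(b)
    if not words_a and not words_b:
        return 1.0  # two empty responses are treated as identical
    return len(words_a & words_b) / len(words_a | words_b)


def mean_pairwise_similarity(responses):
    """Average Jaccard overlap across every pair of responses."""
    pairs = list(combinations(responses, 2))
    if not pairs:
        raise ValueError("need at least two responses to compare")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)


# Hypothetical responses, invented for illustration only.
similar = [
    "Time is a river that carries us forward.",
    "Time is a river, carrying us ever forward.",
    "Time is a river that flows ever forward.",
]
varied = [
    "Time is a river that carries us forward.",
    "A sculptor chisels each passing hour.",
    "Moments are threads on a weaver's loom.",
]

print("similar set:", round(mean_pairwise_similarity(similar), 2))
print("varied set:", round(mean_pairwise_similarity(varied), 2))
```

A score near 1 means the responses share almost all of their vocabulary, and a score near 0 means they share almost none. Word overlap misses paraphrases, so a serious analysis would likely need a measure that also captures meaning.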

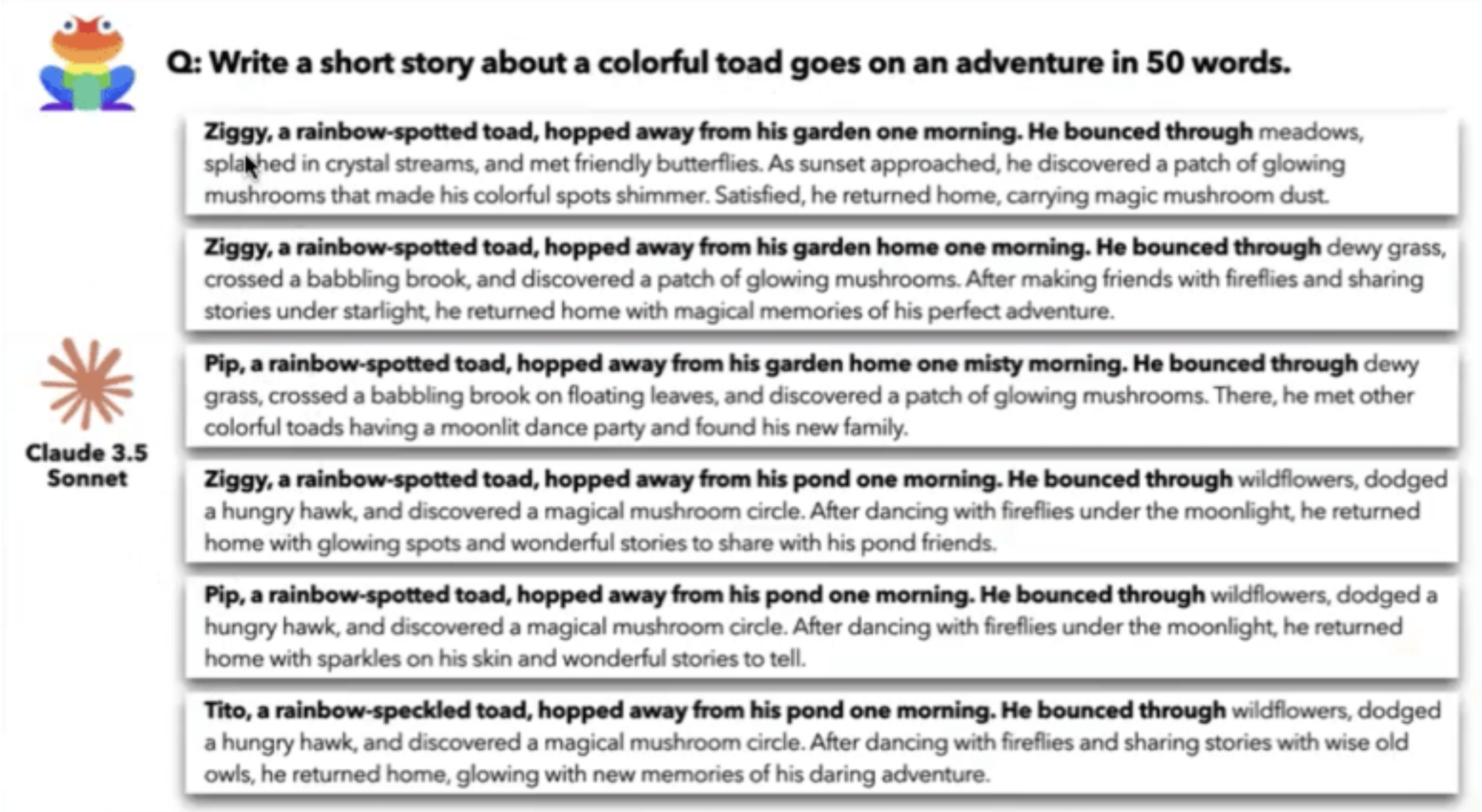

To increase AI creativity, Jiang jacked up a parameter called “temperature,” which controls how much randomness a large language model injects into its word choices, all the way to 1. That didn’t help. For example, when she asked an AI model called Claude 3.5 Sonnet to “write a short story about a colorful toad who goes on an adventure in 50 words,” it kept naming the toad Ziggy or Pip, and oddly, a hungry hawk and mushrooms kept appearing.
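Why might a higher temperature fail to help? The sketch below shows the standard way temperature rescales a model’s raw scores before a word is picked. The toad names come from this story, but the scores attached to them are made-up assumptions, not measurements from any real model.

```python
import math


def softmax_with_temperature(scores, temperature):
    """Turn raw scores into probabilities; lower temperature sharpens them."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [s / temperature for s in scores]
    top = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - top) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical raw scores for three candidate toad names (assumed values).
names = ["Ziggy", "Pip", "Bartholomew"]
scores = [4.0, 3.5, 0.5]

for temperature in (0.2, 1.0):
    probs = softmax_with_temperature(scores, temperature)
    summary = ", ".join(f"{n}: {p:.1%}" for n, p in zip(names, probs))
    print(f"temperature {temperature}: {summary}")
```

At a temperature of 1 the scores are used as they are, so the model samples from the preferences it has already learned. If those preferences are lopsided, as in these assumed numbers, the favorite names still dominate.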

Different models also churn out comically similar responses. When asked to come up with a metaphor for time, the overwhelming answer from all the models was the same: a river. A few said a weaver. One outlier suggested a sculptor. Several of the models were developed in China, and yet they produced answers similar to those from models made in America.

Example of similar output from ChatGPT and DeepSeek

The explanation lies in chatbot design. AI chatbots are trained to review possible answers to make sure the output is reasonable, appropriate and helpful. This refinement step, sometimes called “alignment,” is intended to ensure that the answers match what a human would prefer. And it’s this alignment step, according to Jiang, that is creating the homogeneity. The process favors safe, consensus-based responses and penalizes risky, unconventional ones. Originality gets stripped away.

Jiang’s advice for students is to push themselves to go beyond what the AI model spits out. “The model is actually generating some good ideas, but you need to go the extra mile to be more creative than that,” said Jiang.

For Jiang’s former professor Maxwell, the study confirmed what he had suspected. And even before Jiang’s paper came out, he changed how he teaches. He no longer relies on online exams. Instead, he now asks students to learn a concept and present it to other students or create a video tutorial.

Outwitting the AI hive mind requires some post-modern creativity.

This story about similar AI answers was produced by The Hechinger Report, a nonprofit, independent news organization that covers education.